Why the hell can’t we just have both? One of the biggest problems with smart speakers and voice assistants is that they’re so damn stupid so often. If A.I. were to become smart enough to be what the current assistants/speakers aren’t, surely that would drive device sales and engagement astronomically higher, right?

That would be the goal. The tricky part is matching intents that align with some API integration to whatever psychobabble the LLM spits out.

In other words, the LLM is just predicting the next word, so how do you know when to take an action like turning on the lights, ordering a pizza, or setting a timer? The way that was done with Alexa needs to be adapted to fit the way LLMs work.

Eh, just ask the LLM to format requests in a way that can be parsed into a function call.

It’s pretty trivial to get an LLM to do that.

In fact, it’s literally the basis for the “tools” functionality in the new OpenAI/ChatGPT stuff!

That “browse the web”, “execute code”, etc. is all just the LLM formatting things in a specific way.

Microsoft seems to be attempting this with the new Copilot in Windows. You can ask it to open applications, etc., and also chat with it. But it is still pretty clunky when it comes to the assistant part (e.g. I asked it to open my power settings and after a bit of to and fro it managed to open the Settings app, after which I had to find the power settings for myself). And they’re planning to charge for it, starting at an outrageous $30 per month. I just don’t see that it’s worth that to the average user.

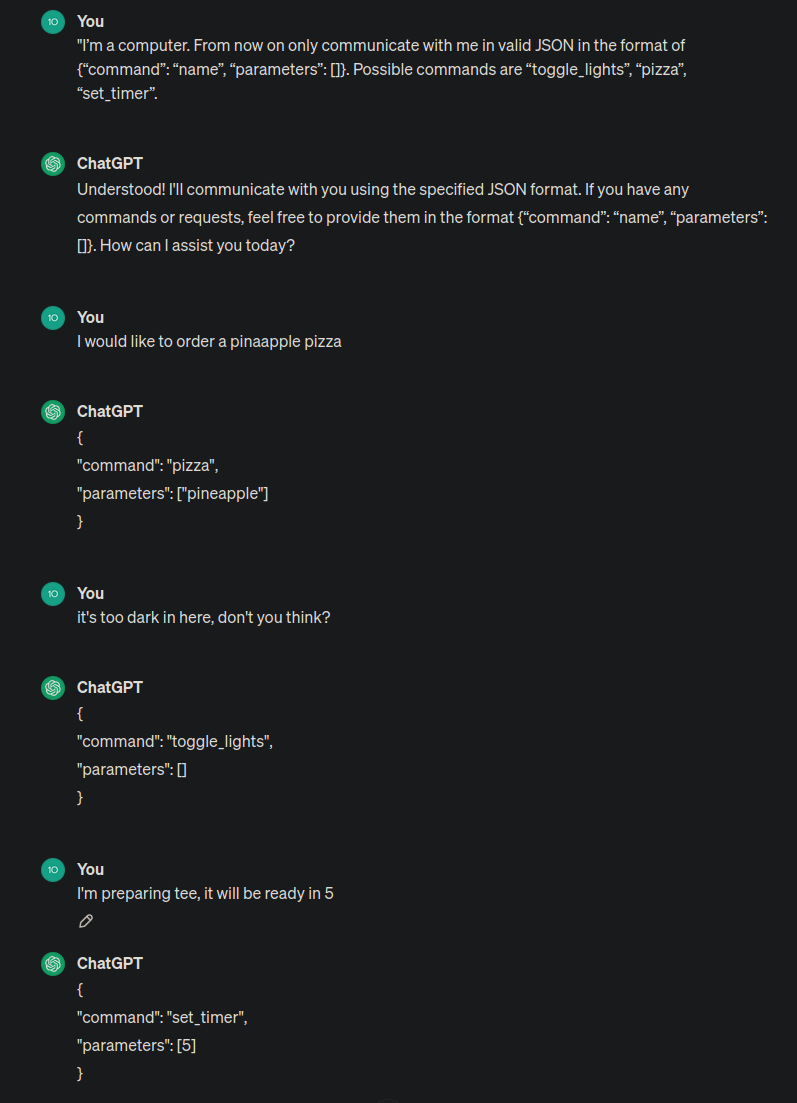

It’s actually fairly easy. “I’m a computer. From now on only communicate with me in valid JSON in the format of {"command": "name", "parameters": []}. Possible commands are "toggle_lights", "pizza", "set_timer".” And so on and so on. Current models are remarkably good at responding with valid JSON; I didn’t have any issues with that. They will still hallucinate about details (like what should happen if you try to set a timer for pizza?), but I’m sure you can train those models to address those issues. I was thinking about doing an OpenAI/Google Assistant bridge myself for Spotify. Like “Play me that Michael Jackson song with that video clip with monsters”. The current assistant can’t handle that, but you can just ask ChatGPT for the name of the song and then pass it to the assistant. This is what they have to do, but on a bigger scale.
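For anyone curious what the receiving side of that could look like, here is a rough sketch, not anybody's actual implementation. It assumes the model's reply arrives as a plain string in the {"command", "parameters"} shape from the prompt above. The handler functions and their parameters are made up for illustration, and no real LLM or smart-home API is called.

```python
import json
from typing import Callable

# Hypothetical handlers: a real bridge would call a smart-home,
# ordering, or timer API here instead of returning a string.
def toggle_lights(room: str) -> str:
    return f"lights toggled in {room}"

def pizza(kind: str) -> str:
    return f"ordered a {kind} pizza"

def set_timer(seconds: int) -> str:
    return f"timer set for {seconds} seconds"

# Only commands listed here can ever run, whatever the model says.
HANDLERS: dict[str, Callable[..., str]] = {
    "toggle_lights": toggle_lights,
    "pizza": pizza,
    "set_timer": set_timer,
}

def dispatch(reply: str) -> str:
    """Parse one model reply and run the matching handler, or say why not."""
    try:
        message = json.loads(reply)
    except json.JSONDecodeError:
        return "error: reply was not valid JSON"
    if not isinstance(message, dict):
        return "error: reply was not a JSON object"

    command = message.get("command")
    parameters = message.get("parameters", [])
    if not isinstance(command, str) or command not in HANDLERS:
        return f"error: unknown command {command!r}"
    if not isinstance(parameters, list):
        return "error: parameters must be a list"

    try:
        return HANDLERS[command](*parameters)
    except TypeError:
        # Wrong number of arguments, e.g. a hallucinated extra parameter.
        return f"error: bad parameters for {command!r}"

if __name__ == "__main__":
    print(dispatch('{"command": "set_timer", "parameters": [180]}'))
    print(dispatch('{"command": "dance", "parameters": []}'))
```

The allow-list is the important part: a hallucinated command or a chatty non-JSON answer gets rejected instead of executed, and the error string could be fed back to the model for another try.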

I just tried the new OpenAI voice conversation feature and thought about this too. It’s everything I had hoped and dreamed that voice assistants would be when they first came out. It’s really surprising that the ones from huge tech companies suck so much.

The tech to make them as good as what you just tried only came about more recently.

Voice assistants, particularly Siri, are structured in a VERY different way.

Because the elephant in the room is that AI isn’t actually AI but is a huge database of internet and creative content combined with a language processing tool that takes its best guess at how to respond with that information to you.

AI today is just linear algorithms with bigger faster databases.

We can’t have both because Alexa’s job is not to give customers a good experience, it’s to make them comfortable re-ordering Tide Pods with their voice.

Even households with Prime and an Echo in every room don’t trust that removed with their credit card. Making her smart won’t fix that; she’s a failure.

Damn voice assistants are going to be our memory of the 2010s like car phones were to the 80s.

Pretty much everyone I know uses them daily.

Me: Play Whitehorse the band

Google Assistant: Playing Whitehorse the song by some other guy

Me: No, play Whitehorse the Canadian band

Google Assistant: Playing Whitehorse the album by a third guy

Me: Play Achilles Desire

Google Assistant: Playing Whitehorse

Me: Play Tu vuò fà l’americano on Spotify

Google Assistant: !?!?!?!?

Me: Play Laisse tomber les filles

Google Assistant: !?!?!?

Me: Play Les Cowboys Fringants on Spotify

Google Assistant: !?

Me: Play Les Cowboys F R I N G A N T S

Google Assistant: Playing Les Cowboys Fringants

I only ever use those junky voice assistants when driving, and they are useless half the time.

Me: Turn on the kitchen

Alexa: I’m sorry, what device?

Me: KITCHEEEEEEN

It used to work flawlessly for every room in my house, and then a few months ago it just started doing that stupid “what device?” shit.

Not only are voice assistants not improving, they’re actively getting worse.

70% of the time, finding my phone and doing it myself would have been faster than arguing with a dumb speaker.

I find them really good for

- setting timers

- playing generic music

- pausing other devices that are playing

- simple questions like current temperature or forecast

When I don’t already have my phone in hand.

You put your phone down?

👌👍

I don’t know anyone who uses them at all.

“Alexa, add bananas”

“Alexa, 3 minutes”

“Alexa, add 30 seconds”

I think that’s just about everything I’ve ever used it for.

This is better with kids. My niece figured it out and often spoke to Alexa:

Niece: Alexa, add farts and pepperoni pizzas to the grocery list.

Niece: Alexa, play baby shark on the bedroom speaker.

Niece: Alexa, remind me to kiss my butt in 10 minutes.

(Leaves room, her mom was there a few minutes later, in time for the reminder.)

Etc…

When you leave an Alexa-enabled Echo sitting around 4-to-8-year-olds, you get some interesting requests… and entertainment.

True, there’s that :)

And of course there are those times that Alexa completely misunderstands. Neither my wife nor I know how it happened, but some months back we discovered “blow job” on our list.

Every time I try anything other than the most basic things, like setting a timer, it just fails miserably. It would be so useful for hands-free operation in the car, but even things like calling or navigating are broken beyond belief.

Talking faster is one of the more helpful hints I ever got.

But never try to get your car to play phonk, it’ll just play you some funk. Which is cool too, but not what I was going for.

I’ll try it, but honestly at this point I don’t see any hope for it anymore, when the difference between the names Karolina and Carolina is enough to confuse it. Like, I give it the first and last name and it says it can’t find it, even though it heard the name just fine but decided it’s written with a C instead of a K, so it doesn’t exist in my contacts.

Sounds like a personal problem. Maybe language. It works very well for most of my peers. I rarely have issues with friends and family.

Right, you definitely don’t have friends.

Whatever you need to tell yourself.

This is the best summary I could come up with:

Amazon is going through yet another round of layoffs, reports Computerworld, and once again the company’s devices-and-services division appears to be bearing the brunt of it.

The layoffs will primarily affect the team working on Alexa, the Amazon voice assistant that drives the company’s Echo smart speakers and other products.

“Several hundred roles are impacted,” the company said in a statement, “a relatively small percentage of the total number of people in the Devices business who are building great experiences for our customers.”

Amazon hasn’t released an AI-powered version of Alexa yet, but it showed “an early preview” of its efforts in September, “based on a new large language model that’s been custom-built and specifically optimized for voice interactions.”

But the hardware is sold at cost, and people interact with Alexa mainly to play music or check the weather, not to spend money on Amazon or anywhere else.

It has been a tough year for Amazon’s devices division, which has already borne a large share of the 27,000 layoffs that the company has announced in the last 12 months.

The original article contains 460 words, the summary contains 179 words. Saved 61%. I’m a bot and I’m open source!