cross-posted from: https://lemmy.ml/post/2811405

"We view this moment of hype around generative AI as dangerous. There is a pack mentality in rushing to invest in these tools, while overlooking the fact that they threaten workers and impact consumers by creating lesser quality products and allowing more erroneous outputs. For example, earlier this year America’s National Eating Disorders Association fired helpline workers and attempted to replace them with a chatbot. The bot was then shut down after its responses actively encouraged disordered eating behaviors. "

The company my workplace partners with for our IT Helpbot has given us a lot of insight into how their LLM system works, and the big thing about it is LangChain and the checks and balances.

Like, you ask “how fix printer error” and instead of hallucinating a response, it first queries our help articles for the correct information and finds the right snippet to include, along with a link to the source. It also checks whether the user has access to that source material. If not, it won’t return it, but it will proactively tell the user so and ask whether they want to open a help request for access to that info. (We haven’t implemented a lot of this stuff in our own workplace because it requires so many coordinated integrations, so this is a best-case scenario.)
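To make that flow concrete, here is a rough sketch of the "look it up, check access, then answer" step. This is not the vendor's actual code: the `Article` type, the `answer` function, the group names and the keyword-overlap matching are all assumptions I made up for illustration (a real system would more likely use embedding search over the help articles).

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Article:
    """Hypothetical help article with an access list (illustration only)."""
    title: str
    url: str
    text: str
    allowed_groups: frozenset


def _tokens(s):
    # Lowercased word tokens, used for a naive keyword-overlap score.
    return set(re.findall(r"[a-z0-9]+", s.lower()))


def find_best_article(query, articles):
    """Return the article sharing the most words with the query, or None."""
    q = _tokens(query)
    best, best_score = None, 0
    for article in articles:
        score = len(q & _tokens(article.title + " " + article.text))
        if score > best_score:
            best, best_score = article, score
    return best


def answer(query, user_groups, articles):
    """Answer only from a retrieved article the user is allowed to see."""
    article = find_best_article(query, articles)
    if article is None:
        # Nothing to ground the answer in, so say so instead of guessing.
        return {"status": "no_match",
                "message": "No help article matches that question."}
    if not (article.allowed_groups & set(user_groups)):
        # Do not leak the snippet or link; offer an access request instead.
        return {"status": "no_access",
                "message": "An article exists but you lack access. "
                           "Open a help request for access?"}
    return {"status": "ok", "snippet": article.text, "source": article.url}
```

The point of the design is that the bot never answers from thin air: every successful reply carries a source link, and the permission check happens before anything from the article is shown.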

Then, before sending, a second AI comes in and double-checks whether the response is truthful, factual and non-toxic. If it isn't, the response has to be regenerated.
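That second-pass check can be sketched as a simple generate, check, retry loop. Again, this is only my guess at the shape of it: `generate_checked`, the `generate` and `check` callables and the `max_attempts` limit are hypothetical names, and in a real system both callables would be calls to models rather than plain functions.

```python
from typing import Callable, Optional


def generate_checked(
    prompt: str,
    generate: Callable[[str, int], str],
    check: Callable[[str], bool],
    max_attempts: int = 3,
) -> Optional[str]:
    """Return the first draft that passes the checker, or None.

    generate(prompt, attempt) stands in for the answering model.
    check(draft) stands in for the second model that vets the draft.
    """
    for attempt in range(1, max_attempts + 1):
        draft = generate(prompt, attempt)
        if check(draft):
            return draft
        # Draft rejected: loop around and regenerate.
    # Every attempt failed the check, so send nothing rather than a bad answer.
    return None
```

Capping the number of attempts is an assumption on my part, but without some limit a checker that never approves anything would keep the user waiting forever.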

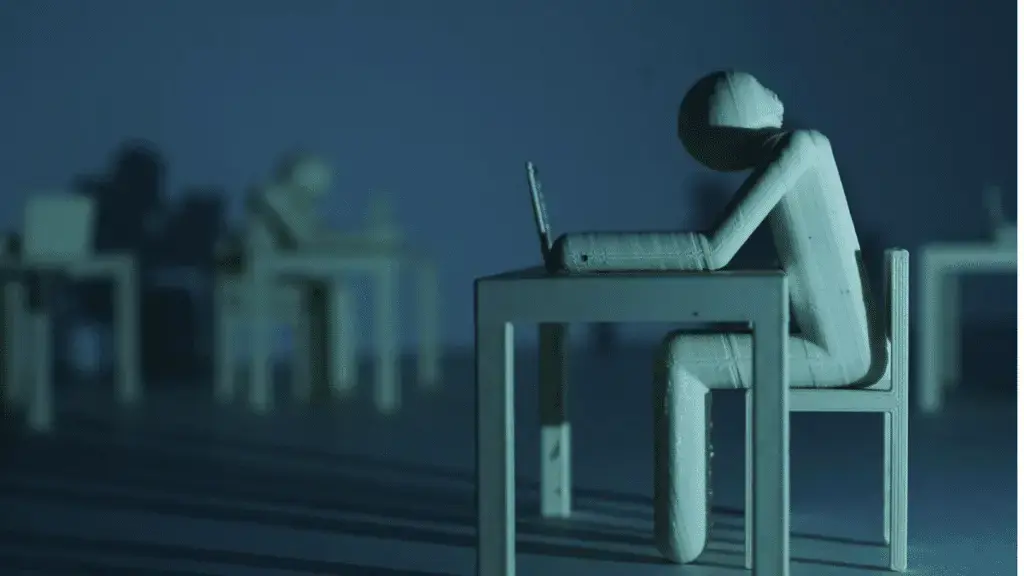

This stuff is incredibly powerful but it’s not as simple as “train an LLM and release it on the world” - you need to really think through it as one tool in your toolbox, and how it will interact with those other tools. The only people it’s good at replacing, at least in its current state, are L1 Help Desk staff who only read and respond from SOPs. Otherwise, Copilots can be a good way to assist with coding (ChatGPT has given me great insight into my PHP errors, for example) but they certainly can’t do the actual work for you.

I wouldn’t say the hype is dangerous or overblown, because this stuff can be absolutely transformative if applied correctly, but executives see dollar signs and think they can replace real thinking humans and then they suffer the consequences, because they didn’t understand the very initiative they directed.

That drivel reads like something a chatbot would write, or maybe not, since that would probably be more informative and creative. It just repeats the same old nonsense that went through the press a dozen times and is seriously lacking in substance.

"the harms of generative AI, including racism, sexism"

Has this person even bothered to touch any of the popular LLMs? They are all censored to the nth degree. Half the time they’ll refuse to summarize Star Wars. Whole groups of topics are completely impossible to discuss with an LLM. Yes, you can run a local LLM with some of the censorship removed or custom train one, but that’s a lot of extra effort. The models by default are extremely neutered.

"For example, when a lawyer requested precedent case law, the tool made up cases out of whole cloth."

Lawyer uses tool for task tool was never designed for and is fundamentally unable to be good at by design. Surprised Pikachu face

"In reality, these systems do not understand anything."

Utterly baseless claims without even an example are my favorite.

"In another act of resistance, artists and computer scientists created Glaze,"

And promotes snake oil.